Update 8/20/17: 3DR Holdings won their case against Just 3D Print. The decision has what I would consider to be the only actual written consideration of this matter by a court (thus far…). That court found that there was no defamation because 1) 3DR’s post was not defamatory, and 2) even if it were defamatory, Just 3D Print failed to prove that 3DR’s actions were in any way related to any harm experienced by Just 3D Print. The court did not need to determine if any copyright infringement actually occurred in the underlying dispute in order to reach this conclusion.

Surprisingly enough, this is the third post in what I suppose now qualifies as the saga of Just 3D Print downloading hundreds of model files from Thingiverse and selling the prints on eBay (previous posts here and here).

This post is occasioned by a recent defamation case brought by Just 3D Print against Stratasys in relation to Stratasys’ reporting on the saga. The purpose of this post is to explain what happened in that and related cases, as well as to review the new tweaks in the underlying copyright claims. All of the disclaimers and facts from the earlier posts apply, especially that this is not legal advice of any kind and that I am not an expert in Pennsylvania civil procedure or defamation law. This is just my take on a situation that I believe has an impact on how we think about the combination of 3D printing, copyright, and Creative Commons licensing.

A Short Bit of Background

The original post lays out the history so far, but let me give a quick summary of how we got here.

As you may recall, this incident began when Thingiverse user loubie raised concerns about the behavior of a company called Just 3D Print. Loubie accused Just 3D Print of pulling around 2,000 models and associated images from Thingiverse to sell in an eBay store. Just 3D Print was allegedly doing this without permission of the designers and, in at least some cases, in violation of the terms of various CC licenses on the models. Just 3D Print responded with a litany of excuses and justifications (listed and discussed here) essentially claiming that its behavior did not violate US copyright law. Various designers contacted eBay to accuse Just 3D Print of infringement and the shop was eventually removed.

That would have been the end of it, except Just 3D Print then sued three publications that covered the drama for defamation.

Just 3D Print lost one case, one case is pending (edit 8/20/17: see update above), and won one case against Stratasys. Stratasys is a 3D printer company, not a publication, but the suit was related to articles published on the Stratasys blog.

After the Stratasys win, Just 3D Print reached out to a number of outlets that had covered the original incident (“outlets” being broadly defined as it includes this blog) asking for a disclaimer on the original articles noting that the court had found that Just 3D Print had not infringed any copyrights.

This struck me as strange because, assuming the public facts available were generally correct, it appeared that Just 3D Print probably did infringe on copyrights. It also seemed strange that the parties involved would have litigated an entire copyright infringement lawsuit.

Intrigued, I decided to investigate further. At the end of my investigations I have come to two conclusions. First, that Just 3D Print’s view of how copyright applies to 3D printing files and objects (and just operates generally) continues to be incorrect. Second, that it is unreasonable to claim that the court in the Stratasys case came to any conclusions regarding copyright infringement or, for that matter, defamation.

The Defamation Suits

Before we get to the suits, the Digital Media Law Project has a nice background on defamation law and on Pennsylvania defamation law specifically (the cases were brought in Pennsylvania courts). When thinking about defamation cases, it is important to look at the actual words used by the speaker and to remember that, generally speaking, the substantial truth of those statements is a defense against those claims. It is also worth remembering that stating opinions (as opposed to facts) is generally protected by the First Amendment, making it much less likely that they will be considered defamatory (more here).

In its complaints (against TechCrunch, Stratasys, and 3DR), Just 3D Print claimed that the articles resulted in a torrent of hate mail, wasted hours responding to press, and harm to Just 3D Print’s reputation. Note that if these were the result of legitimate, fact-based criticism, it is highly unlikely that these harms could form the basis for a defamation claim. In other words, it is only a problem if those harms flow from defamatory conduct.

Perhaps most impressively, Just 3D Print claimed that the defamatory articles by TechCrunch and Stratasys caused it to shut down a product line projected to generate $2,000,000 a month in gross profits within one or two years. In its claim against 3DR Holdings (publisher of the trade publications 3Dprint.com and 3Dprintingindustry.com), this claim is restated as projected revenue of $100,000,000.00.

The claim against 3DR has not been resolved yet (edit 8/20/17: it was resolved in 3DR’s favor - see update above), so I’m going to put that aside. If you are curious, I’ve posted some relevant documents here.

TechCrunch defeated the claim Just 3D Print brought against it. The decision gives two reasons. First, that the statements made by TechCrunch were opinions that did not qualify as defamation. Second, that there was a statute of limitations issue.

The TechCrunch result is what I would have expected. The media outlets were reporting on a public controversy involving Just 3D Print. In most contexts, that kind of reporting would not qualify as defamation. The courts did not have to spend any time on the facts of the underlying copyright infringement claims to reach their conclusion. The nature of the reporting itself meant that the inquiry could stop there.

The Stratasys Case

All of which made the Stratasys case so confusing. Stratasys lost the case, but the final disposition does not include any sort of explanation of why the court found against Stratasys. It just found for Just 3D Print.

Intriguingly, the time stamp at the top of the document suggests that the hearing started at 10:55 am and ended at 11:14 am. Presumably a conclusion that Stratasys defamed Just 3D Print would require the court to examine the underlying copyright infringement claims and the intent of Stratasys in publishing the piece. After all, Just 3D Print was representing this decision as a vindication against accusations of copyright infringement. How could a court get through all of that in 19 minutes? I’m two and a half blog posts in and I haven’t managed to get everything straight.

Fortunately, transcripts of the oral arguments are available from the court.* As is so often the case, the transcripts answer some questions and raise others.

The argument was short, so I encourage you to take a moment to read them yourself (I mean, you’ve already at least skimmed this much of an article about all of this stuff). I think it is fair to say that they are not a model of judicial clarity or efficiency.

The entire discussion appears to focus on two questions. First, the relationship between the person who wrote the article posted on the Stratasys website and Stratasys itself. Second, the necessity of Stratasys, as opposed to simply a lawyer representing Stratasys, to be present in the courtroom.

Good eye if you noticed that neither of these questions directly relates to the underlying allegation of copyright infringement or the defamatory nature of the articles about those allegations.

Unfortunately, the court does not really even address either of these preliminary questions. Instead, apparently frustrated by the discussion of those questions up to that point, 19 minutes in, the court summarily cuts off the proceedings and enters a judgment for Just 3D Print. No real consideration of who published the article. No real consideration of who needs to be in the courtroom. Absolutely no consideration of infringement or defamation.

The attorney for Stratasys responds to all of this by saying “This is unbelievable” and I’m inclined to agree. The most charitable reading may be that the court found for Just 3D Print on the procedural grounds that Stratasys failed to appear. It does not seem reasonable to represent that the court came to a reasoned conclusion on either the defamation claims or the underlying infringement claims.

I’ve asked Stratasys if they plan to appeal this decision and will update this post if I get any additional information.

Was There Even Infringement?

As I mentioned earlier, this post exists because Just 3D Print reached out to claim that the court had found that Stratasys had defamed them and that the court had found them innocent of copyright infringement. For the reasons I just explained, this strikes me as at least an overreading of the decision, if not an outright mischaracterization.

More concerning to me was that, when asked for clarification, Just 3D Print continued to argue that no infringement could have occurred. Many of the reasons they put forward were identical to the ones that surfaced during the original conflict. I’m not in a position to know if this continued reliance on incorrect readings of copyright law is intentional or merely indicative of a lack of understanding. In the interest of brevity (ha!), I’ll just refer to the earlier post on this issue that explains why I believe these claims are incorrect, specifically claim #3 that uploading a model to Thingiverse under a Creative Commons license somehow abandons the creator’s copyright, and claim #8 that copyright registration is required for copyright protection to exist and that infringement cannot happen absent that registration.

Just to clarify, uploading a model to Thingiverse under a Creative Commons license does not abandon your copyright interest in that model. And you do not need to register your copyright in order to get copyright protection. In fact, your unregistered copyright can be infringed upon.

Was There Ever an Accusation of Infringement?

There is one final argument that Just 3D Print made in the course of our discussion: that Just 3D Print had never been accused of copyright infringement, and that no copyright infringement has ever been proven.

At least parts of this claim are demonstrably false. The Thingiverse posting that kicked this entire saga off alleged facts that would constitute infringement. The comments on that post contain other explicit accusations of infringement.

Furthermore, the exhibits provided to me by Just 3D Print contain over 300 allegations of infringement that were filed against the Just 3D Print eBay shop. eBay’s “VERO” process is DMCA compliant, which means that, in accordance with US copyright law, it requires allegations of infringement to be backed up by a statement under penalty of perjury that the claims are accurate. The claims sworn to include “I have a good faith belief that the use of the material in the manner complained of above is not authorized by the Intellectual Property Owner, its agent, or the law.” In other words, there are over 300 instances of Just 3D Print being accused of infringement. These accusations complied with US copyright law and required the accusers to stand by their accusations under penalty of perjury.

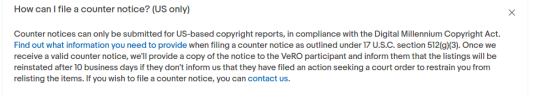

eBay’s VERO/DMCA process allows an eBay shop owner who is accused of infringement to submit a counternotice and have the targeted listing reinstated. However, Just 3D Print did not contest a single accusation of infringement and instead allowed the listings to be removed. It is important to point out that the failure to respond to a DMCA takedown notice does not mean that the original accusation was correct. There are many structural reasons that make it hard or intimidating to respond to a DMCA notice even if it is incorrect.

When pressed on this failure to respond – a failure that ultimately resulted in the shutting of the eBay shop and apparently contributed to the loss of millions of dollars of revenue – Just 3D Print replied that the eBay “contact us” phone number simply connected them with a “random person that says they will pass on the info.” Regardless, the eBay VERO/DMCA page provides explicit instructions on how to challenge an accusation of infringement with a link to the form (no phone call required):

With tens of millions of dollars allegedly on the line, it is unclear why Just 3D Print failed to challenge a single accusation of infringement via the form provided by eBay. This is all the more confusing because Just 3D Print does not appear to have a general aversion to engaging with legal processes.

Just 3D Print’s second response to the accusations is that it does not consider any of the accusations to be valid because there is no evidence that the individuals reporting infringement had registered their copyright prior to making the accusation. As noted above, this is not a requirement for copyright infringement. Furthermore, if this was Just 3D Print’s position, it could have been communicated to eBay and its accusers via the eBay process.

None of this constitutes a judicially arrived-at decision that Just 3D Print infringed on any copyrights. But, at a minimum, it certainly constitutes allegations of infringement in my book.

Why Does This Matter?

Is this post anything more than responding to someone being wrong on the internet?

I hope so. As I’ve noted in the earlier posts regarding the dispute, we are still in a formative time in the context of 3D printing, copyright, and Creative Commons licensing. While there is some ambiguity, there isn’t total ambiguity. Throwing settled areas of copyright law into question does not help work towards a solution for the complicated stuff. When there are legitimate disagreements about the intersection of 3D printing, copyright, and Creative Commons licenses, they are important to explore. But if someone is bringing dubious claims into a discussion it is important to identify them as such as quickly as possible.

As always, I’ll update these and other posts as more information becomes available.

*Bonus: How do you get all of the legal documents?

It’s both harder than it should be and in fact not so hard. The important thing to remember when looking up legal documents (and this is true at all levels) is that court document systems are not sophisticated. Generally speaking, for every court it seems there was a moment in the late 1990s or early 2000s where someone decided that things should be available online. At that point some contractor spun up an online portal, and that portal has never been updated since. So when you are searching courts for information just pretend it is 2002 and proceed accordingly.

For the court in this case, that means going to the public portal and logging in as a public user. You then need something pretty specific (a case number, a party name) to find documents. For the Stratasys case that number is SC-17-02-24-6077. If you can get a thread, you’ll end up at a docket page. Even small cases will have a number of documents, because this page captures everything that happens procedurally in the case. Fortunately the names are at least semi-descriptive, so you can be reasonably sure something called “Judgment” will be a judgment.

There is one thing that is obviously missing from the docket page, and that is the transcript. For that I just called up the court clerk to ask for instructions. Courts know that their websites aren’t amazing and court clerks are generally pretty friendly. In this case they happily told me how much the transcript would cost, where to send the check, and what else to include in the envelope. A few weeks later I had the transcript in my inbox.

Sad face image courtesy of Loubie.
