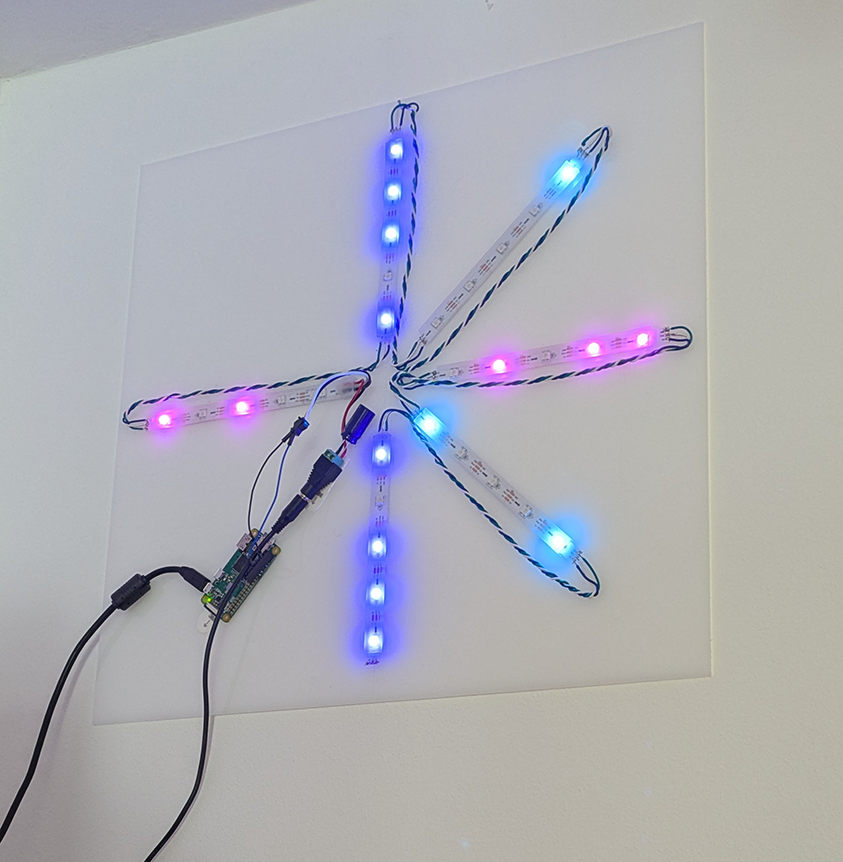

Moving from NYC to Berlin gave me an excuse to update my old Pi-Powered MTA Subway Alerts project for the BVG. Now, as then, the goal of the project is to answer the question “if I leave my house now, how long will I have to wait for my subway train?” This time, though, it answers that question for trams as well as subway trains.

New Open GLAM Toolkit & Open GLAM Survey from the GLAM-E Lab

This post originally appeared on the Engelberg Center blog

What Does an Open Source Hardware Company Owe The Community When it Walks Away?

This week Prusa Research, once one of the most prominent commercial members of the open source hardware community, announced its latest 3D printer. The printer is decidedly not open source.

Keep 3D Printers Unlocked (the win! 2023)

Last summer I submitted a request that the Copyright Office renew an existing rule that allows users to break DRM that prevents them from using materials of their choice in 3D printers. As of October 28th, that rule has been renewed for another three years.

Licensing Deals Between AI Companies and Large Publishers are Probably Bad

Licensing deals between AI companies and large publishers may be bad for pretty much everyone, especially everyone who does not directly receive a check from them.
